Speak the Language: Multi Audio and Language Localisation

by THEOplayer on July 23, 2020

As video streaming grows globally, the challenge is not only to get your content onto your viewers’ screens, but also to deliver it in their language. Offering your application, audio and subtitles in only one language can be a barrier to reaching a wider audience, or can cause you to miss a majority of viewers completely. As a content provider, you can use Multi Audio and/or Language Localisation to give your content the flexibility it needs to reach audiences who may not speak its original language. Letting viewers change the language of your application, audio and subtitles/captions instantly addresses this. In this blog we discuss the why, how and when of Multi Audio and Language Localisation, as well as how to enable them using our THEOplayer Universal Video Player Solution and its Multi Audio and Localisation features.

When Should You Use Multi Audio?

The most common use cases for Multi Audio and Language Localisation are Video On Demand services, such as Subscription Video On Demand (SVOD) and Advertising-supported Video On Demand (AVOD), which let customers watch their favourite shows or movies in their native language. Multi Audio can also be crucial for other use cases, such as international conferences live streamed to multiple countries, shareholder or government meetings with international members, e-learning platforms or live news coverage.

This feature allows your audience to tune in easily, via live stream or VOD, regardless of their location and language skills, with audio and subtitles in their preferred language. Subtitles present the words spoken in a video's audio as text on screen. Captions additionally indicate who is speaking (if it isn’t clear) and describe important sounds such as music. This can help viewers better understand and enjoy what they are watching. The change in audio and subtitles is seamless, giving your audience the optimal viewing experience. A larger audience reach also means better monetisation opportunities for your content, allowing you to optimise your revenue.

Multi Audio, Language Localisation and subtitles/captions are also opportunities to make your content more accessible for viewers with disabilities. Accessibility simply means that viewers can perceive, understand, navigate, and engage with video content equally, and without obstacles or barriers, in this case language barriers. Additionally, accessibility benefits all viewers, whether or not they have a disability (e.g. situational limitations, slow internet connections, temporary disabilities, etc.). If you’d like to read more about accessibility for video, check out our blog here.

How Does Multi Audio Work?

In the case of a live streaming event, a camera feed and the primary audio track are transmitted to a central production facility or a truck. At the production center, additional audio commentary in different languages is multiplexed onto the transport stream. Increasingly, subtitles/captions are also generated on the fly using machine learning technology and multiplexed into the transport stream. This master feed is then delivered to a multi-bitrate encoder and passed to a packager, which maps the audio tracks, subtitles and/or captions onto the streaming protocol's specific constructs. The result is then made available to video players via a CDN. Depending on the specific settings of the player, either the configured default audio track and subtitles/captions are presented, or a customer-specific variant is used.

For a post-processed workflow such as Video On Demand, things are slightly different. In general, a movie is shot in different takes, which are edited together to create a mezzanine or master format. During post-production, different audio tracks as well as different subtitles are added to the master file. Subtitles can also be made available as separate subtitle files. The master file is then handed to an offline multi-bitrate transcoder, and the transcoded asset is handed (possibly with the separate subtitle files) to a packager, which maps the audio tracks and subtitles/captions onto protocol-specific constructs, and so on.
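To make the packaging step more concrete, here is a minimal sketch of how audio tracks could be mapped onto one protocol-specific construct, the HLS `EXT-X-MEDIA` tag. The `AudioTrack` class, the `ext_x_media` helper, the `audio` group name and the playlist paths are assumptions made for illustration only; a real packager does considerably more.

```python
from dataclasses import dataclass


@dataclass
class AudioTrack:
    """Hypothetical description of one audio rendition handed to a packager."""
    language: str          # language tag, e.g. "en" or "fr"
    name: str              # human-readable label shown in a player menu
    uri: str               # illustrative path to the audio media playlist
    default: bool = False  # True for the track a player should start with


def ext_x_media(track: AudioTrack, group_id: str = "audio") -> str:
    """Render one audio track as an HLS EXT-X-MEDIA tag."""
    default = "YES" if track.default else "NO"
    return (
        "#EXT-X-MEDIA:TYPE=AUDIO,"
        f'GROUP-ID="{group_id}",'
        f'NAME="{track.name}",'
        f'LANGUAGE="{track.language}",'
        f"DEFAULT={default},AUTOSELECT=YES,"
        f'URI="{track.uri}"'
    )


example_tracks = [
    AudioTrack("en", "English", "audio/en.m3u8", default=True),
    AudioTrack("fr", "French", "audio/fr.m3u8"),
]

for example_track in example_tracks:
    print(ext_x_media(example_track))
```

In an HLS multivariant playlist, each video variant then refers to this group through the `AUDIO` attribute of its `EXT-X-STREAM-INF` tag, so every video quality can be combined with every audio language.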

Streaming protocols allow you to explicitly indicate which audio, subtitle or caption track is the default. Application developers can override this and select the language based on the language settings of the platform, or the customer can select a specific language themselves.
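The selection order described above (explicit customer choice first, then the platform language, then the default signalled in the stream) can be sketched as follows. This is an illustrative model rather than any player's actual API: the `pick_audio_track` function and the dictionary shape of a track are assumptions.

```python
def pick_audio_track(tracks, user_choice=None, platform_language=None):
    """Pick a track from a list of dicts with 'language' and 'default' keys.

    Priority: the customer's explicit choice, then the platform language,
    then the track flagged as default, then simply the first track.
    """
    for wanted in (user_choice, platform_language):
        if wanted is None:
            continue
        # Compare primary subtags only, so "fr-BE" matches a "fr" track.
        primary = wanted.lower().split("-")[0]
        for track in tracks:
            if track["language"].lower().split("-")[0] == primary:
                return track
    for track in tracks:
        if track.get("default"):
            return track
    return tracks[0] if tracks else None


available = [
    {"language": "en", "default": True},
    {"language": "fr", "default": False},
    {"language": "es", "default": False},
]

# A device set to Belgian French gets the French track.
print(pick_audio_track(available, platform_language="fr-BE"))
```

Matching on the primary language subtag is a deliberate simplification; an application that distinguishes regional variants would need a stricter comparison.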

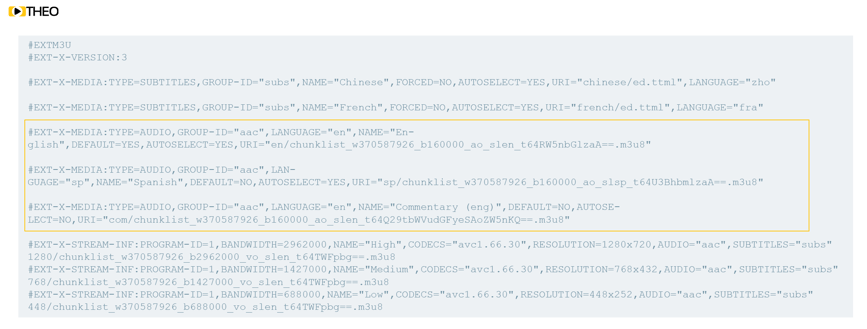

Example of Apple HLS Manifest; Audio Track Section Details
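For readers who want to inspect such an audio track section programmatically, the sketch below parses the `EXT-X-MEDIA` tags of a small manifest. The manifest text is a made-up sample, not a transcription of the image above, and `parse_audio_tracks` is a hypothetical helper rather than part of any SDK.

```python
import re

# Matches KEY=value pairs where the value is either quoted or unquoted.
ATTRIBUTE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')

SAMPLE_MANIFEST = """#EXTM3U
#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="audio",NAME="English",LANGUAGE="en",DEFAULT=YES,AUTOSELECT=YES,URI="audio/en.m3u8"
#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="audio",NAME="French",LANGUAGE="fr",DEFAULT=NO,AUTOSELECT=YES,URI="audio/fr.m3u8"
#EXT-X-MEDIA:TYPE=SUBTITLES,GROUP-ID="subs",NAME="English",LANGUAGE="en",DEFAULT=NO,AUTOSELECT=YES,URI="subs/en.m3u8"
#EXT-X-STREAM-INF:BANDWIDTH=2000000,CODECS="avc1.4d401f,mp4a.40.2",AUDIO="audio",SUBTITLES="subs"
video/2000k.m3u8
"""


def parse_audio_tracks(manifest: str):
    """Return the attributes of every EXT-X-MEDIA tag with TYPE=AUDIO."""
    tracks = []
    for line in manifest.splitlines():
        line = line.strip()
        if not line.startswith("#EXT-X-MEDIA:"):
            continue
        attribute_list = line.split(":", 1)[1]
        attrs = {key: value.strip('"') for key, value in ATTRIBUTE.findall(attribute_list)}
        if attrs.get("TYPE") == "AUDIO":
            tracks.append(attrs)
    return tracks


for audio in parse_audio_tracks(SAMPLE_MANIFEST):
    print(audio["LANGUAGE"], audio["NAME"], audio["DEFAULT"], audio["URI"])
```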

THEOplayer Multi Audio and Platform Support

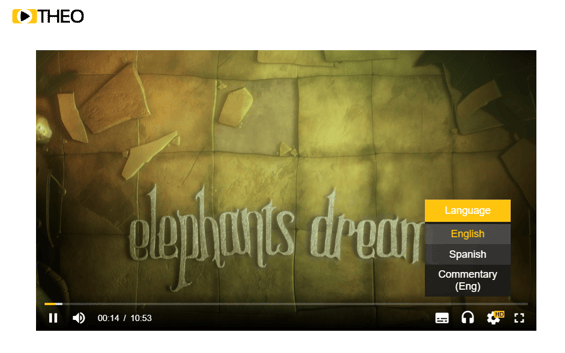

THEOplayer Universal Video Player Solution supports multiple audio tracks and multiple subtitle/caption languages in a single video, for both live and on-demand streaming. The THEOplayer SDKs come with a default User Interface, which allows users to select a different audio language and subtitle track on the fly. Equally, our SDKs expose a rich set of APIs to select the audio, subtitle and caption tracks from within the application code.

Besides this capability, we also offer the possibility to “localise” the default THEOplayer User Interface (UI) of the SDK. With this feature, it is possible to localise the text-based UI helper messages. In fact, all text displayed in the THEOplayer SDK UI can be localised, which ultimately allows your viewers to understand the different options of the player and the content’s capabilities directly within the UI. THEOplayer's localisation solution allows you to automatically adapt the player to different languages.

The THEOplayer Universal Video Player solution ensures that you offer multi-audio, language localisation, caption and subtitle support in a consistent manner across all SDKs, so your content reaches your audience on all devices and major browsers, including Chromecast, iOS, Android, Android TV, tvOS (Apple TV), Samsung Tizen, LG webOS, Fire TV and Roku, as well as Google Chrome, Safari, Microsoft Edge and Firefox.

Multi Audio Demo Video with THEOplayer UVP Solution

Check out our Multi Audio demo video below, or check out the full demo here.

You can find our other demos for Language Localisation or Captions and Subtitles in our Demo Zone.

Have questions about multi audio and language localisation for your content and application? Don’t hesitate to contact our experts for personalised advice.
